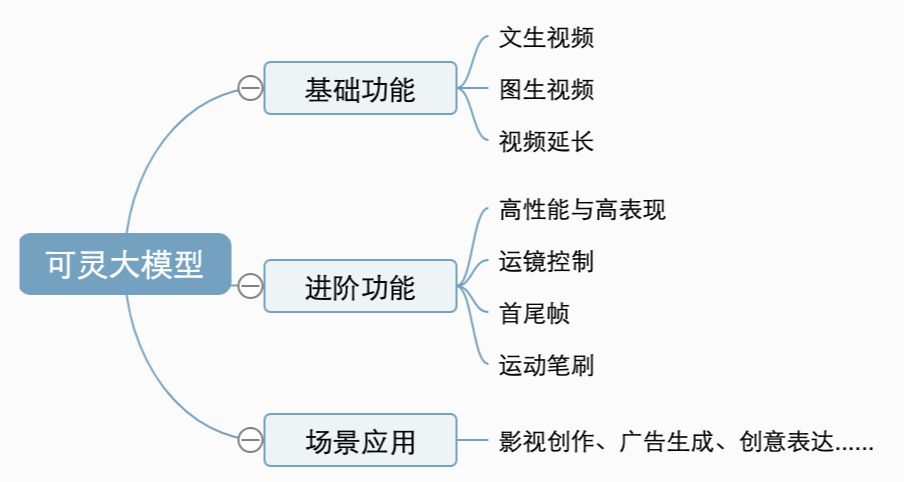

About Kling: Kling is a large video generation model developed by the Kuaishou large model team. It currently supports text-to-video, image-to-video, video extension, camera movement control, first and last frames, and other capabilities, allowing users to create artistic videos easily and efficiently.

Platform link: https://www.1ai.net/12558.html

Basic Functions

Text-to-Video

Enter a piece of text, and the Kling model generates a 5 s or 10 s video from it, turning the text into video footage. Two generation modes are currently supported: Standard mode generates faster, while High Quality mode produces better picture quality. Kling also supports three aspect ratios, 16:9, 9:16, and 1:1, to meet a wider range of video creation needs.

The prompt is the main language of interaction with a text-to-video model and directly determines the video content the model returns, so how to write an effective prompt for AI video creation is something every creator wants to learn. As a newcomer in the AI video model 2.0 generation, Kling is still being iterated and updated, and its potential still needs to be explored. To help everyone get more out of Kling and AI video, we have prepared a Kling prompt formula for reference.

The core components of the formula are subject, motion, and scene, which are the simplest and most basic units for describing a video frame. To describe the subject and scene in more detail, just list several descriptive phrases while making sure every element you want to appear is included in the prompt. Kling will expand the prompt based on what we write and generate a video that meets expectations.

For example, starting from "a big cat reading a book in a cafe", we can add detail to the subject and the scene: "a big cat wearing black-rimmed glasses reading a book in a cafe, the book lying on the table, a cup of coffee on the table with steam rising from it, next to the cafe window". The picture Kling generates will then be more specific and controllable. To add camera language and a lighting atmosphere, we can also try "medium shot, background out of focus, ambient lighting, a big cat wearing black-rimmed glasses reading a book in a cafe, the book lying on the table, a cup of coffee on the table with steam rising from it, next to the cafe window, cinematic color grading". This further improves the texture of the generated video and may even yield results beyond expectations.
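The way the cafe example is built up, from a bare subject, motion, and scene to a version with camera, lighting, and atmosphere phrases, can be sketched in Python. This is only an illustration: the function `build_text_prompt`, its parameter names, and the order in which the optional parts are joined are assumptions made for this sketch, not part of Kling. Kling itself only receives the final text.

```python
def build_text_prompt(subject, motion, scene,
                      camera=None, lighting=None, atmosphere=None):
    """Join prompt components into one comma-separated prompt string.

    Hypothetical helper: the names and the ordering are assumptions
    that simply mirror the cafe example in the text.
    """
    # Optional camera and lighting phrases go first, as in the example.
    leading = [part for part in (camera, lighting) if part]
    # Subject, motion, and scene form the core of the prompt.
    core = [f"{subject} {motion} {scene}"]
    # An optional atmosphere phrase closes the prompt.
    trailing = [atmosphere] if atmosphere else []
    return ", ".join(leading + core + trailing)


basic = build_text_prompt("a big cat", "reading a book", "in a cafe")

detailed = build_text_prompt(
    subject="a big cat wearing black-rimmed glasses",
    motion="reading a book",
    scene="in a cafe, the book lying on the table, next to the cafe window",
    camera="medium shot, background out of focus",
    lighting="ambient lighting",
    atmosphere="cinematic color grading",
)

print(basic)
print(detailed)
```

Keeping the three core components mandatory and the stylistic ones optional reflects the text: subject, motion, and scene are the minimum, and everything else is refinement.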

The formula is meant to help us describe the desired video more effectively. We can also let our imagination run free, go beyond the formula, and communicate with Kling freely and boldly; the results may be even more surprising!

Some tips on how to use it:

Use simple words and sentence structures, and avoid overly complex language.

Keep the scene content as simple as possible, so that it can be completed within 5 to 10 seconds.

Words such as "Oriental mood, China, Asia" make it easier to generate Chinese-style scenes and Chinese people.

Video models are currently not sensitive to numbers. With a prompt such as "10 puppies on the beach", it is hard to keep the count consistent.

For split-screen scenes, you can use a prompt such as "4 camera angles, spring, summer, fall and winter".

At this stage it is difficult to generate complex physical motion, such as a bouncing ball or an object thrown high into the air.

Image-to-Video

Enter an image, and the Kling model generates a 5 s or 10 s video based on its understanding of the image, turning the image into video footage. Enter an image together with a text description, and the Kling model generates the video from the image according to the text. Standard and High Quality generation modes are supported, along with three aspect ratios, 16:9, 9:16, and 1:1, to meet a wider range of video creation needs.

Image-to-video is currently the feature creators use most often. From a video creation perspective, image-to-video is more controllable: creators can generate a good image in advance and then animate it, which greatly lowers the cost and barrier of professional video creation. From a creative perspective, Kling offers another creative platform, where users control the movement of the subject in the image through text. Recent viral examples include "bringing old photos back to life", "hugging my childhood self", and "mushroom turns into penguin", which netizens jokingly called a "mushroom-eating hallucination video". These reflect Kling's nature as a creative tool that offers users unlimited possibilities for realizing their ideas.

For image-to-video, controlling the motion of the subject in the image is central, and we provide a prompt formula for reference.

The core components of the formula are the subject and the motion. Unlike text-to-video, image-to-video already has a scene, so we only need to describe the subject in the image and the motion we want it to perform. If several subjects or several motions are involved, listing them in order is enough. Kling will expand the prompt based on our wording and its understanding of the image, and generate a video that meets expectations.

Suppose we want to "make the Mona Lisa in the painting wear sunglasses". If we only enter "wear sunglasses", the model has difficulty understanding the instruction and is more likely to generate the video based on its own judgment. When Kling judges that the image is a painting, it tends to generate a video of a gallery exhibit with camera movement, which is also why images with photo frames easily produce static videos (so don't upload images with photo frames). We therefore need to help the model understand the instruction by describing "subject + motion", for example "Mona Lisa puts on sunglasses with her hand" or "Mona Lisa puts on sunglasses with her hand, and a light appears behind her". The model then responds more readily, including when multiple subjects are involved.
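The "subject + motion" pattern for image-to-video, with several subjects listed in order, can be sketched as a small Python helper. The function `build_image_prompt` and its input format are assumptions made for illustration; Kling only sees the resulting text alongside the uploaded image.

```python
def build_image_prompt(actions):
    """Build an image-to-video prompt from (subject, motion) pairs.

    Hypothetical helper: `actions` is a list of (subject, motion)
    tuples, given in the order the motions should be described.
    """
    parts = []
    for subject, motion in actions:
        # A bare motion such as "wear sunglasses" is what the text
        # warns against, so both halves are required here.
        if not subject or not motion:
            raise ValueError("each entry needs both a subject and a motion")
        parts.append(f"{subject} {motion}")
    return ", ".join(parts)


prompt = build_image_prompt([
    ("Mona Lisa", "puts on sunglasses with her hand"),
    ("a light", "appears behind her"),
])
print(prompt)
```

Requiring a subject for every motion is the point of the sketch: it makes it impossible to send an instruction that names no subject.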

Similarly, the formula is meant to help everyone make better use of image-to-video and raise the success rate of each generation attempt. More creative uses still need to be explored together, so communicate with Kling freely and boldly!

Some tips:

Use simple words and sentence structures, and avoid overly complex language.

Motion should conform to the laws of physics; try to describe motion that could plausibly occur in the image.

If the description differs greatly from the image, the video may cut to a different shot.

At this stage it is difficult to generate complex physical motion, such as a bouncing ball or an object thrown high into the air.

Video Extension

An AI-generated video can be extended by 4 to 5 seconds at a time. Multiple extensions are supported (up to 3 minutes), and you can steer each extension by fine-tuning the prompt.
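As a rough worked example of these numbers, the sketch below estimates how many extensions are needed to reach a target length. It assumes that "up to 3 minutes" refers to the total video length and that each extension adds between 4 and 5 seconds; the function name `extensions_needed` and both constants are illustrative and not part of Kling.

```python
import math

MAX_TOTAL_SECONDS = 180     # assumption: the 3-minute limit is the total length
EXTENSION_SECONDS = (4, 5)  # each extension adds roughly 4 to 5 seconds


def extensions_needed(initial_seconds, target_seconds):
    """Return (fewest, most) extensions needed to reach target_seconds."""
    if target_seconds > MAX_TOTAL_SECONDS:
        raise ValueError("target exceeds the 3-minute limit")
    remaining = max(0, target_seconds - initial_seconds)
    shortest, longest = EXTENSION_SECONDS
    fewest = math.ceil(remaining / longest)  # every extension adds 5 s
    most = math.ceil(remaining / shortest)   # every extension adds only 4 s
    return fewest, most


# A 5 s clip extended to 30 s needs between 5 and 7 extensions.
print(extensions_needed(5, 30))
```

Since each extension may also need several attempts before it meets expectations, these counts are lower bounds on the number of generations actually run.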

The video extension function is in the lower left corner of the tab that appears after a video is generated. There are two modes, "Auto Extension" and "Custom Creative Extension". With Auto Extension, no prompt is needed; the model continues the video based on its own understanding of it. With Custom Creative Extension, the user controls the extended video through text. The prompt must relate to the original video and state its "subject + motion", so that the extended video is less likely to break down. We provide a formula for reference.

Some tips:

The prompt for "Custom Creative Extension" needs to be consistent with the subject of the original video; unrelated text may cause the video to cut to a different shot.

Extension is probabilistic, so it may take several attempts to generate a video that meets expectations.